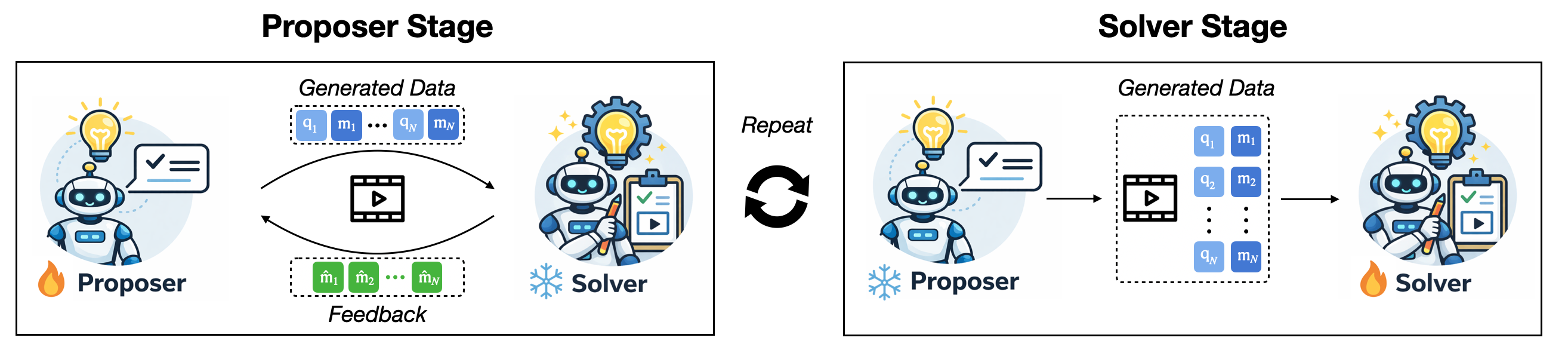

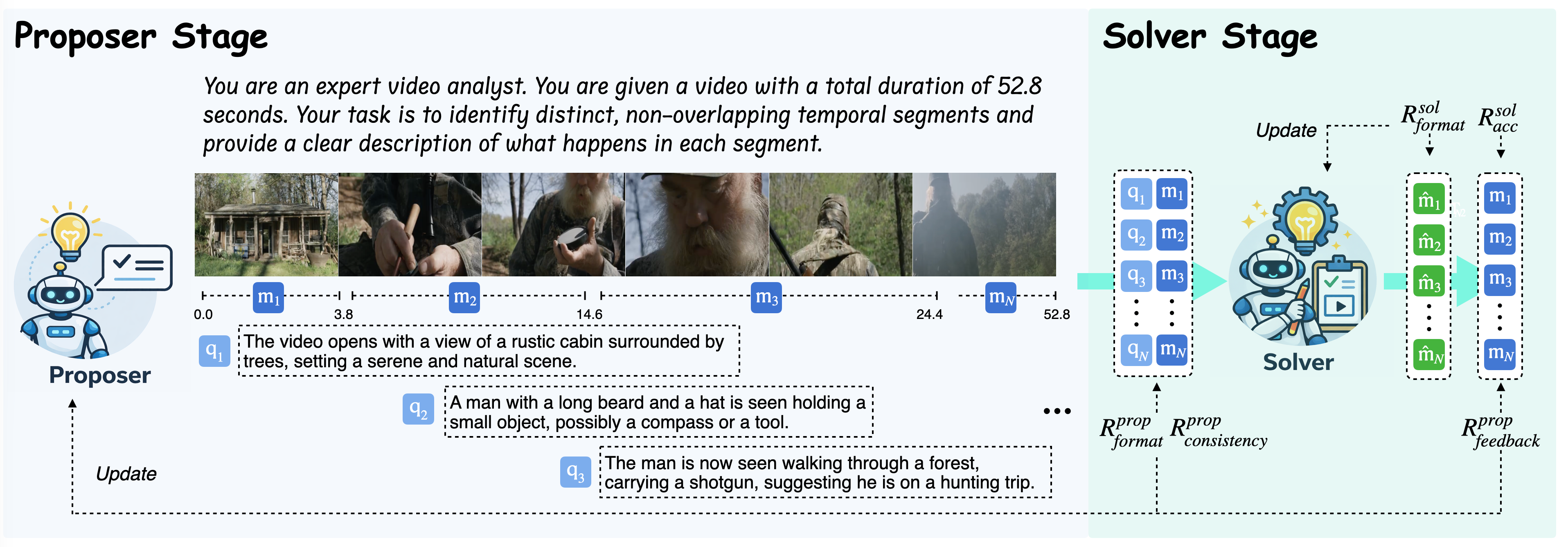

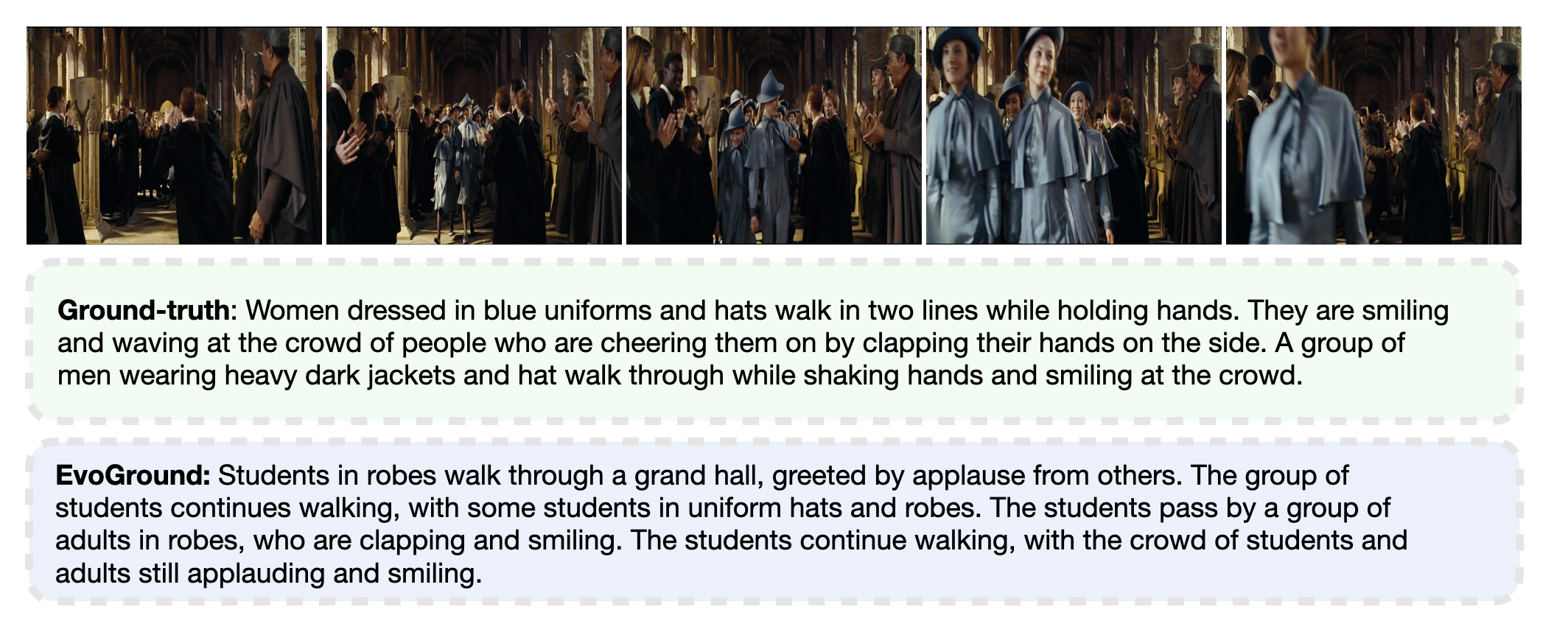

EvoGround consists of two agents: a proposer and a solver. The proposer identifies candidate temporal events in raw videos and generates corresponding query–moment pairs, while the solver learns to ground each query to its temporal moment using only the generated data. Both agents are initialized from the same backbone and evolve solely through a self-reinforcing loop, without any labeled data.
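The loop described above can be sketched as follows. This is a minimal, non-authoritative outline, not EvoGround's implementation: the names `QueryMomentPair`, `self_evolve`, `propose`, `update_proposer`, and `train_solver` are hypothetical, the two models are replaced by plain callables, and the order of the proposer update and the solver update within an iteration is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class QueryMomentPair:
    """One synthetic training example produced by the proposer (hypothetical type)."""
    video_id: str
    query: str    # natural-language description of the event
    start: float  # moment start time, in seconds
    end: float    # moment end time, in seconds


def self_evolve(
    videos: Sequence[str],
    propose: Callable[[str], List[QueryMomentPair]],
    update_proposer: Callable[[List[QueryMomentPair]], None],
    train_solver: Callable[[List[QueryMomentPair]], None],
    num_iterations: int,
) -> List[int]:
    """Run the proposer/solver loop with no human labels.

    Returns how many pairs the proposer generated in each iteration.
    """
    pairs_per_iteration: List[int] = []
    for _ in range(num_iterations):
        # 1) Proposer mines candidate events from raw videos and writes
        #    query-moment pairs for them.
        pairs = [pair for video in videos for pair in propose(video)]
        # 2) Proposer is updated from its rewards (format, consistency, and
        #    feedback from the current solver). Ordering is an assumption.
        update_proposer(pairs)
        # 3) Solver is trained only on the proposer's synthetic pairs.
        train_solver(pairs)
        pairs_per_iteration.append(len(pairs))
    return pairs_per_iteration


# Toy usage: stub callables stand in for the two models.
def toy_propose(video_id: str) -> List[QueryMomentPair]:
    return [QueryMomentPair(video_id, "a person opens a door", 2.0, 5.0)]


solver_batches: List[int] = []
history = self_evolve(
    ["video_a", "video_b"],
    propose=toy_propose,
    update_proposer=lambda pairs: None,
    train_solver=lambda pairs: solver_batches.append(len(pairs)),
    num_iterations=3,
)
```

Passing the models in as callables keeps the sketch focused on the control flow: the only data the solver ever sees is what the proposer generated in the same iteration.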

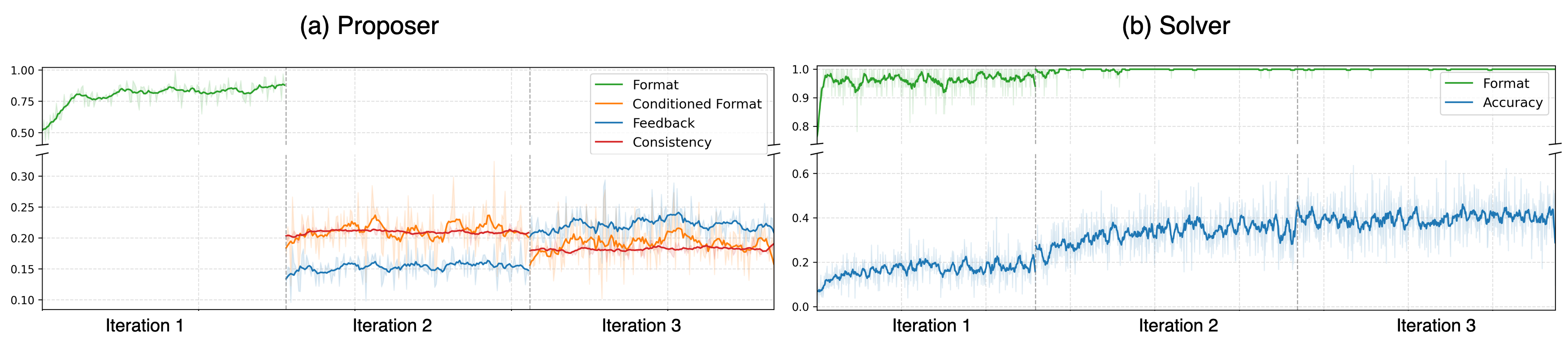

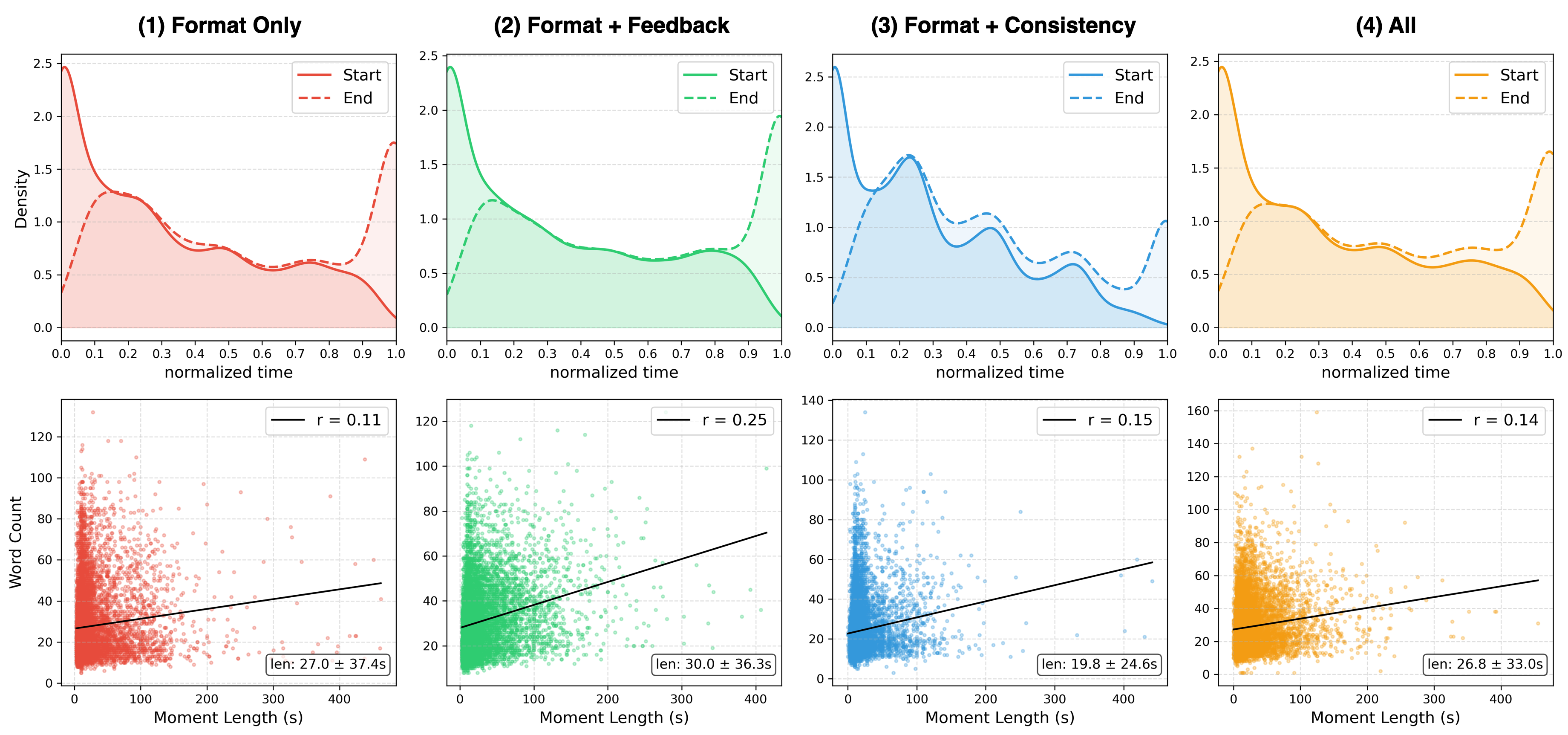

The proposer is guided by three reward criteria: validity (format reward), consistency (consistency reward), and solvability (feedback reward). The consistency reward combines two terms: intra-consistency, which measures how coherently a query aligns with the frames inside its moment, and inter-consistency, which measures how discriminatively the query matches its own moment relative to other moments. The feedback reward uses the solver's accuracy, measured by temporal IoU (tIoU), as a signal of whether the generated pairs are learnable.
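The three proposer rewards can be illustrated with the sketch below. It is one plausible reading, not the paper's definitions: it assumes the query and the frames are already embedded in a shared space (e.g. by a vision-language encoder) and compared with cosine similarity, models inter-consistency as a softmax over moments with a hypothetical `temperature`, mixes the two consistency terms with a hypothetical weight `alpha`, treats validity as a simple well-formedness check, and scores feedback as the mean tIoU of several solver rollouts. All function names are illustrative.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]
Span = Tuple[float, float]  # (start, end) in seconds


def temporal_iou(pred: Span, gt: Span) -> float:
    """Temporal IoU of two [start, end] segments."""
    inter = max(0.0, min(pred[1], gt[1]) - max(pred[0], gt[0]))
    union = (pred[1] - pred[0]) + (gt[1] - gt[0]) - inter
    return inter / union if union > 0 else 0.0


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a > 0 and norm_b > 0 else 0.0


def mean_vector(vectors: Sequence[Vector]) -> List[float]:
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def format_reward(query: str, span: Span, duration: float) -> float:
    """Hypothetical validity check: non-empty query, ordered span inside the video."""
    start, end = span
    return 1.0 if query.strip() and 0.0 <= start < end <= duration else 0.0


def intra_consistency(query_emb: Vector, moment_frames: Sequence[Vector]) -> float:
    """Mean query-frame similarity over the frames inside the query's own moment."""
    return sum(cosine(query_emb, f) for f in moment_frames) / len(moment_frames)


def inter_consistency(query_emb: Vector, moments: Sequence[Sequence[Vector]],
                      own_index: int, temperature: float = 0.1) -> float:
    """Softmax probability that the query picks its own moment over the others."""
    logits = [cosine(query_emb, mean_vector(m)) / temperature for m in moments]
    peak = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(z - peak) for z in logits]
    return weights[own_index] / sum(weights)


def consistency_reward(query_emb: Vector, moments: Sequence[Sequence[Vector]],
                       own_index: int, alpha: float = 0.5) -> float:
    """Hypothetical weighted mix of the intra- and inter-consistency terms."""
    intra = intra_consistency(query_emb, moments[own_index])
    inter = inter_consistency(query_emb, moments, own_index)
    return alpha * intra + (1.0 - alpha) * inter


def feedback_reward(solver_preds: Sequence[Span], proposed: Span) -> float:
    """Mean tIoU of several solver rollouts against the proposer's moment."""
    return sum(temporal_iou(p, proposed) for p in solver_preds) / len(solver_preds)
```

For example, two solver rollouts predicting `(0, 10)` and `(5, 15)` for a proposed moment `(0, 10)` score tIoUs of 1 and 1/3, so the feedback reward is 2/3. A query whose embedding points at its own moment and away from the others scores close to 1 on both consistency terms.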

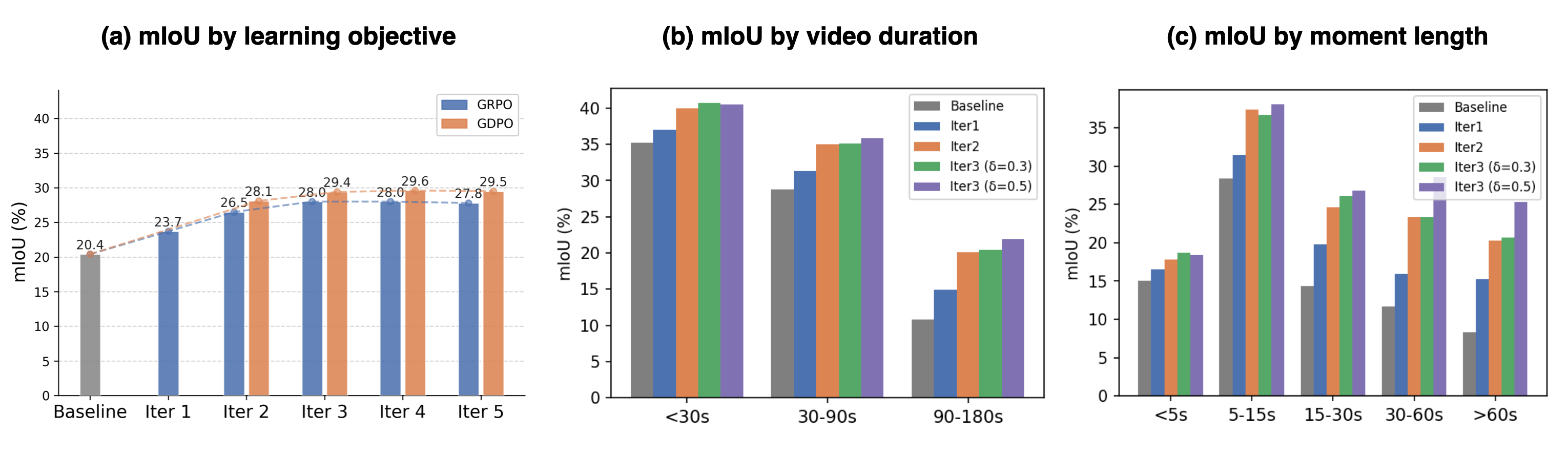

The solver is trained on proposer-generated query–moment pairs using two rewards: a format reward and an accuracy reward based on tIoU. EvoGround adopts GDPO as its RL optimizer, together with a curriculum that progressively raises the solvability threshold across iterations, shifting the focus from coarse matches toward more precise temporal alignments.
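A minimal sketch of the solver-side rewards and the curriculum follows, under stated assumptions. The answer format `"<start> - <end>"` in seconds, the reading of the solvability threshold as a tIoU cutoff for counting a rollout as solved, and the linear schedule from 0.3 to 0.7 are all hypothetical choices for illustration. GDPO itself is not reproduced here; the sketch only produces the per-rollout reward values that an RL optimizer would consume.

```python
import re
from typing import Dict, Optional, Sequence, Tuple

Span = Tuple[float, float]  # (start, end) in seconds

# Hypothetical answer format: "<start> - <end>" in seconds, e.g. "12.5 - 20".
_ANSWER_RE = re.compile(r"^\s*(\d+(?:\.\d+)?)\s*-\s*(\d+(?:\.\d+)?)\s*$")


def parse_answer(text: str) -> Optional[Span]:
    """Return the predicted span, or None if the answer is malformed."""
    match = _ANSWER_RE.match(text)
    if match is None:
        return None
    start, end = float(match.group(1)), float(match.group(2))
    return (start, end) if start < end else None


def temporal_iou(pred: Span, gt: Span) -> float:
    """Temporal IoU of two [start, end] segments."""
    inter = max(0.0, min(pred[1], gt[1]) - max(pred[0], gt[0]))
    union = (pred[1] - pred[0]) + (gt[1] - gt[0]) - inter
    return inter / union if union > 0 else 0.0


def solver_rewards(text: str, gt: Span) -> Dict[str, float]:
    """Format reward (parsable answer) and accuracy reward (tIoU vs. the proposed moment)."""
    span = parse_answer(text)
    if span is None:
        return {"format": 0.0, "accuracy": 0.0}
    return {"format": 1.0, "accuracy": temporal_iou(span, gt)}


def solvability_threshold(iteration: int, num_iterations: int,
                          low: float = 0.3, high: float = 0.7) -> float:
    """Hypothetical linear curriculum: tIoU cutoff rises from `low` to `high`."""
    if num_iterations <= 1:
        return high
    return low + (iteration / (num_iterations - 1)) * (high - low)


def solve_rate(ious: Sequence[float], threshold: float) -> float:
    """Fraction of solver rollouts whose tIoU clears the current threshold."""
    return sum(iou >= threshold for iou in ious) / len(ious)
```

With rollout tIoUs of 0.2, 0.5, and 0.8, the solve rate is 2/3 at a cutoff of 0.5 but only 1/3 at 0.7, so the same predictions count as less solved in later iterations. This is how a rising threshold would push the loop from coarse matches toward tighter alignments.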